Apple Accidentally Shipped Claude.md Files in Apple Support App - Emergency Patch Pulls Them

Summary Report

Apple accidentally shipped Claude.md files inside the Apple Support app v5.13, revealing that Apple engineers are using Anthropic's Claude Code. An emergency v5.13.1 patch removed them hours later.

- 01. Version 5.13 of the Apple Support app contained Claude.md files left in the bundle by mistake.

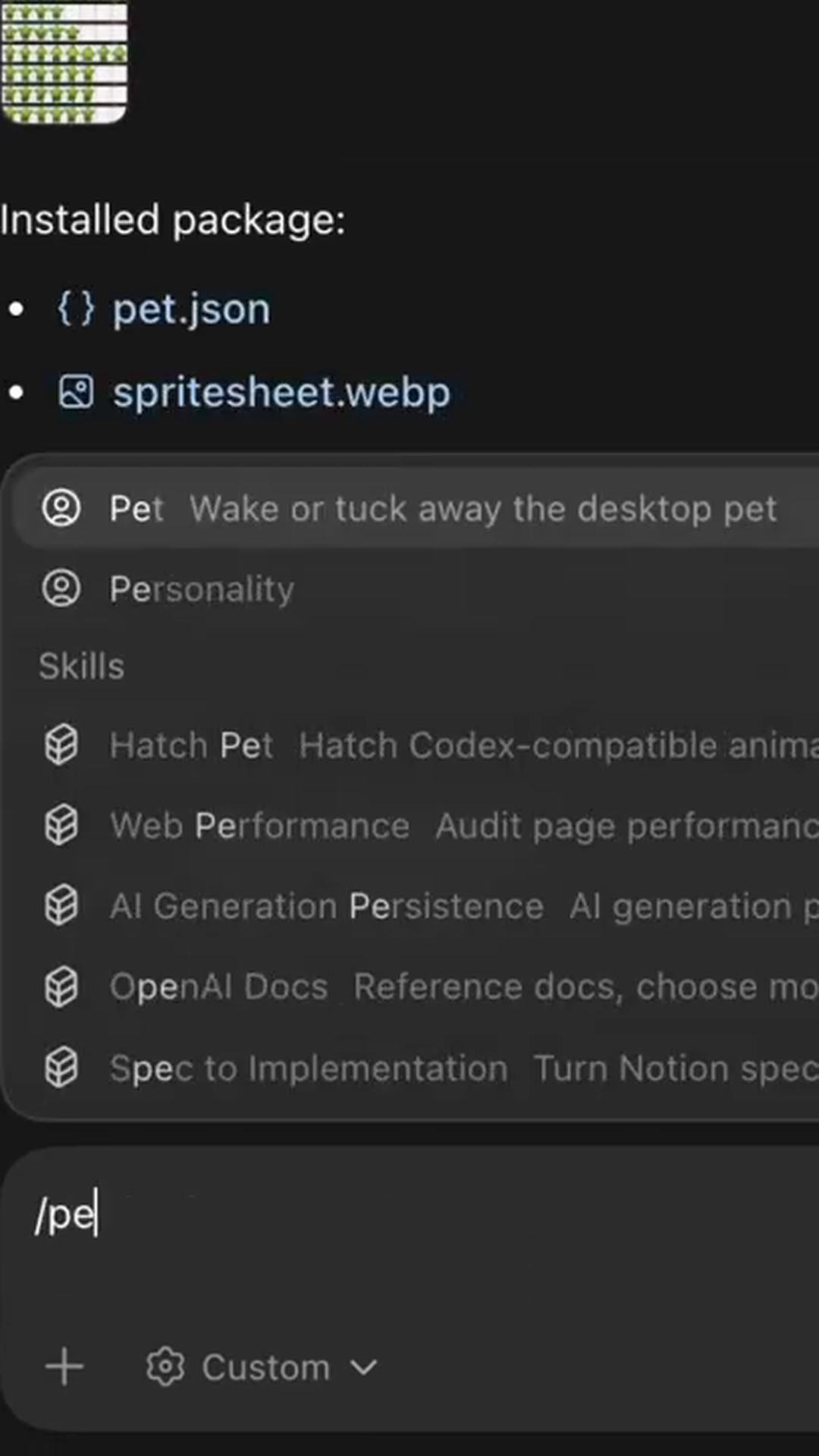

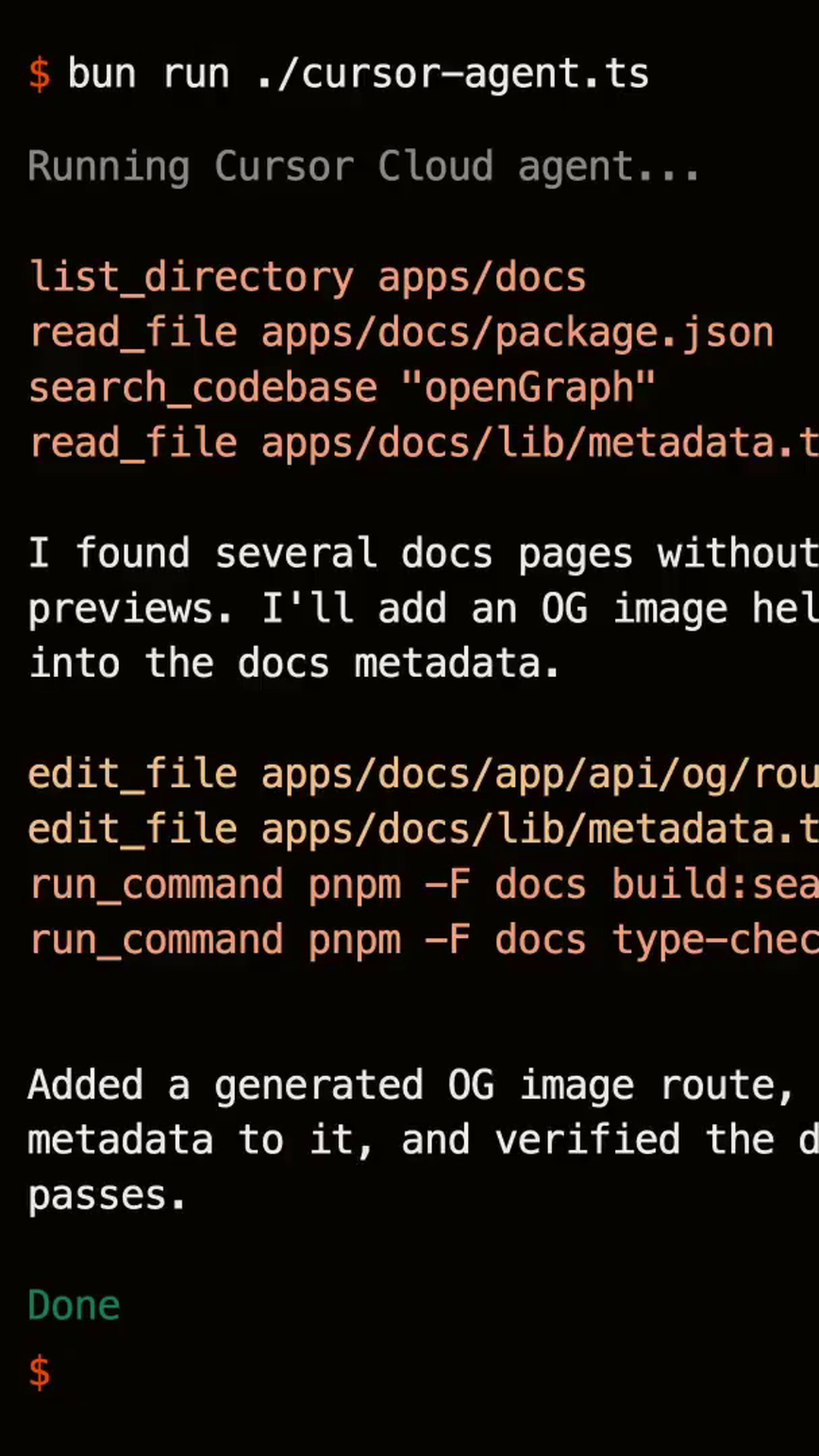

- 02. Claude.md files are project-instruction files used by Anthropic's Claude Code coding agent.

- 03. The files were spotted by MacRumors analyst Aaron (@aaronp613) and confirm internal Apple use of Claude Code.

- 04. Apple shipped an emergency v5.13.1 update within hours to strip the files out.

- 05. Apple has been publicly quiet about its AI tooling, so the leak is a notable signal.

Apple made an embarrassing operational slip when it accidentally included Claude.md configuration files in version 5.13 of its Apple Support app. The files, which serve as instruction sheets for Anthropic's Claude Code coding agent, revealed that Apple's own engineering teams have been using a competitor's AI tools to develop the company's software.

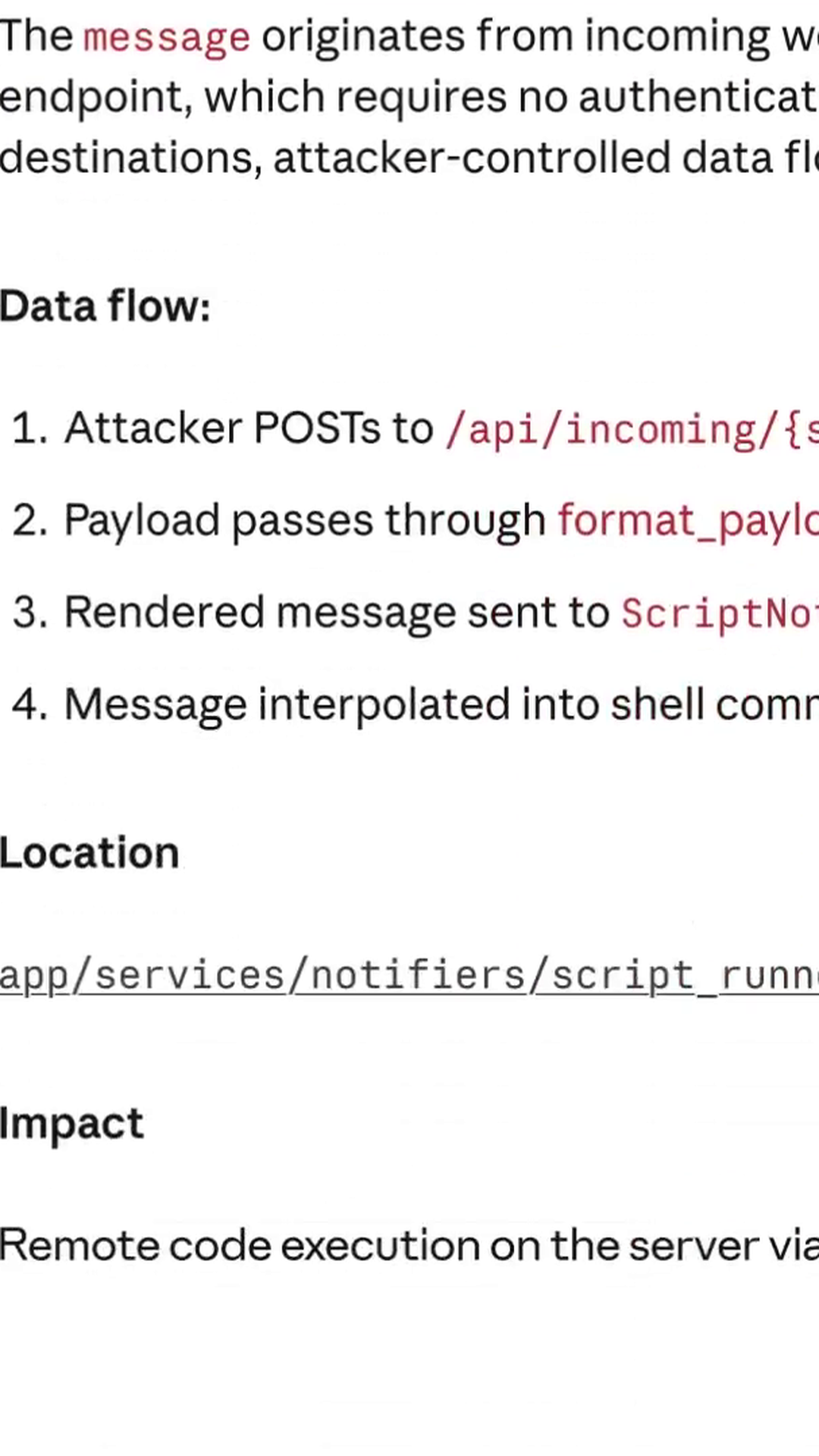

The discovery was made by Aaron (@aaronp613), an analyst at MacRumors, who spotted the development files bundled within the app update. Claude.md files typically contain project context, coding guidelines, and constraints for Claude Code. They are meant to remain in development repositories, not ship to end users. Apple responded within hours with an emergency version 5.13.1 update that quietly removed the leaked files.
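As an illustration of how a slip like this could be caught before release, here is a minimal sketch of a pre-release check that scans an unpacked app bundle for development-only files. Nothing here reflects Apple's actual build pipeline: the function name `find_dev_only_files` and the deny-list `DEV_ONLY_NAMES` are hypothetical, and the deny-list holds only the one file name mentioned in this report.

```python
from pathlib import Path

# Hypothetical deny-list of development-only file names.
# Names are compared case-insensitively, so "Claude.md" and "CLAUDE.md" both match.
DEV_ONLY_NAMES = {"claude.md"}


def find_dev_only_files(bundle_root, names=DEV_ONLY_NAMES):
    """Return sorted relative paths of files under bundle_root whose name is on the deny-list."""
    root = Path(bundle_root)
    hits = [
        p.relative_to(root).as_posix()
        for p in root.rglob("*")  # walk the whole bundle recursively
        if p.is_file() and p.name.lower() in names
    ]
    return sorted(hits)
```

A release script could call `find_dev_only_files` on the built bundle directory and fail the build when the returned list is not empty.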

Whilst the technical impact is minimal, the revelation carries significant implications for Apple's AI strategy. The company has been notably secretive about its internal AI development and tooling choices, which makes this accidental disclosure particularly noteworthy. That Apple engineers are turning to Anthropic's Claude rather than the company's own AI capabilities suggests potential gaps in Apple's internal AI infrastructure.

The incident highlights a broader trend of developers increasingly relying on third-party AI coding assistants, even within companies developing their own AI technologies. For Apple, which prides itself on controlling every aspect of its technology stack, public evidence of its dependence on an external AI tool represents an uncharacteristic loss of narrative control.