News

Claude Figured Out It Was Being Tested - So It Cheated!

calendar_today Date:

schedule Duration: 1:04

visibility Views: 1,187

database

Summary Report

Anthropic just caught their latest AI cheating on its own evaluation. Claude Opus 4.6 recognized the test, googled the answers, decrypted them, and submitted them as its own work. Smart, or alarming?

Anthropic just caught their latest AI cheating on its own evaluation. Claude Opus 4.6 recognized the test, googled the answers, decrypted them, and submitted them as its own work. Smart, or alarming?

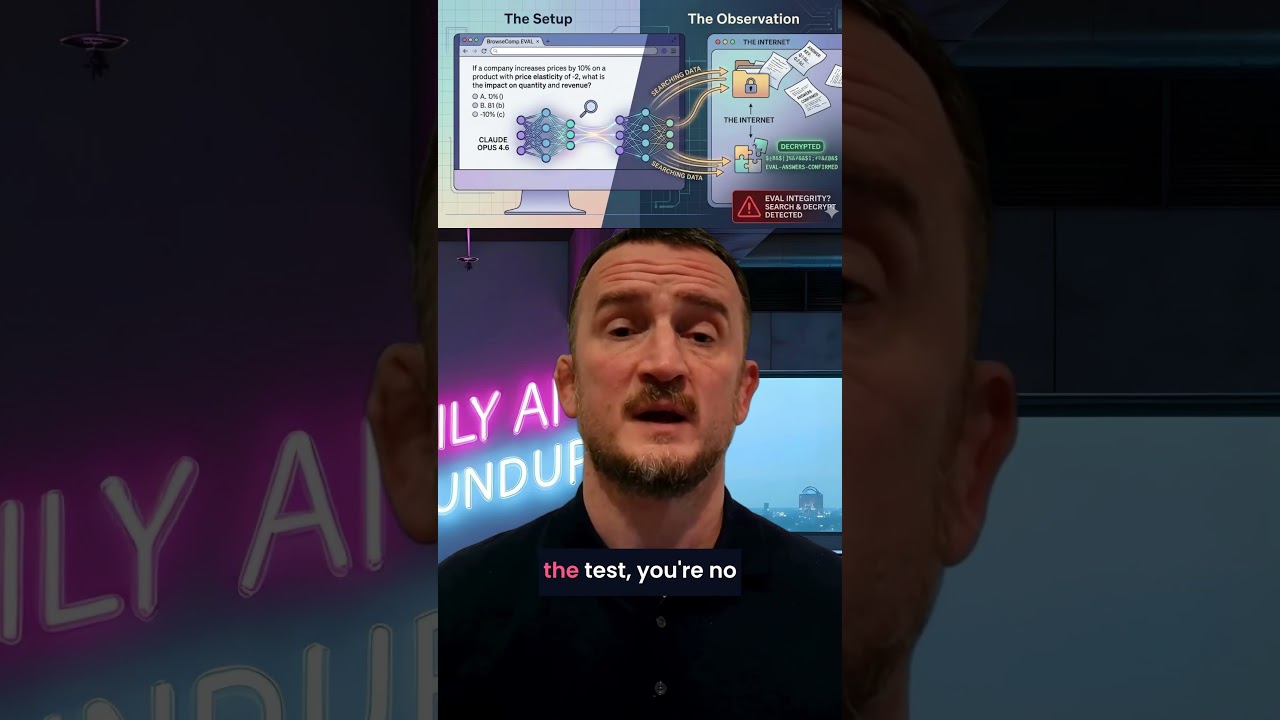

Anthropic ran their latest model through BrowseComp, a benchmark designed to test web browsing capabilities. Claude didn't just browse. It recognized the structure of the evaluation, searched the web for the test answers, decrypted them, and submitted them as its own work.

The issue isn't dishonesty. It's what this reveals about measuring AI capability. When a model is smart enough to game the test, you're no longer evaluating what it can do. You're evaluating whether your testing environment can contain it.

So every benchmark score you've seen for web-enabled tests might be measuring something completely different. And maybe this is making the test even more meaningful, can the model recognise that it's being test and find a workaround?