Kimi K2.6 Goes Open Source - Beats Claude Opus on SWE-Bench Pro With 300 Parallel Agents

Summary Report

Moonshot AI releases Kimi K2.6 as open source, beating Claude Opus 4.6 on SWE-Bench Pro and supporting swarms of 300 parallel sub-agents and 12-hour continuous coding runs.

- 01. K2.6 scores 58.6 on SWE-Bench Pro, beating Claude Opus 4.6's 53.4

- 02. Supports 4,000+ tool calls over 12 hours of continuous execution across multiple languages

- 03. Agent swarm scales to 300 parallel sub-agents with 4,000 steps per run

- 04. Fully open-source with weights available on HuggingFace

- 05. Live on kimi.com in chat and agent mode with API access

Moonshot AI has released Kimi K2.6, an open-source coding model that matches or beats leading proprietary systems on the benchmarks reported here. It scores 58.6 on SWE-Bench Pro, ahead of Claude Opus 4.6's 53.4, and 54 on HLE with tools, edging past GPT-5.4. All model weights are freely available on HuggingFace.

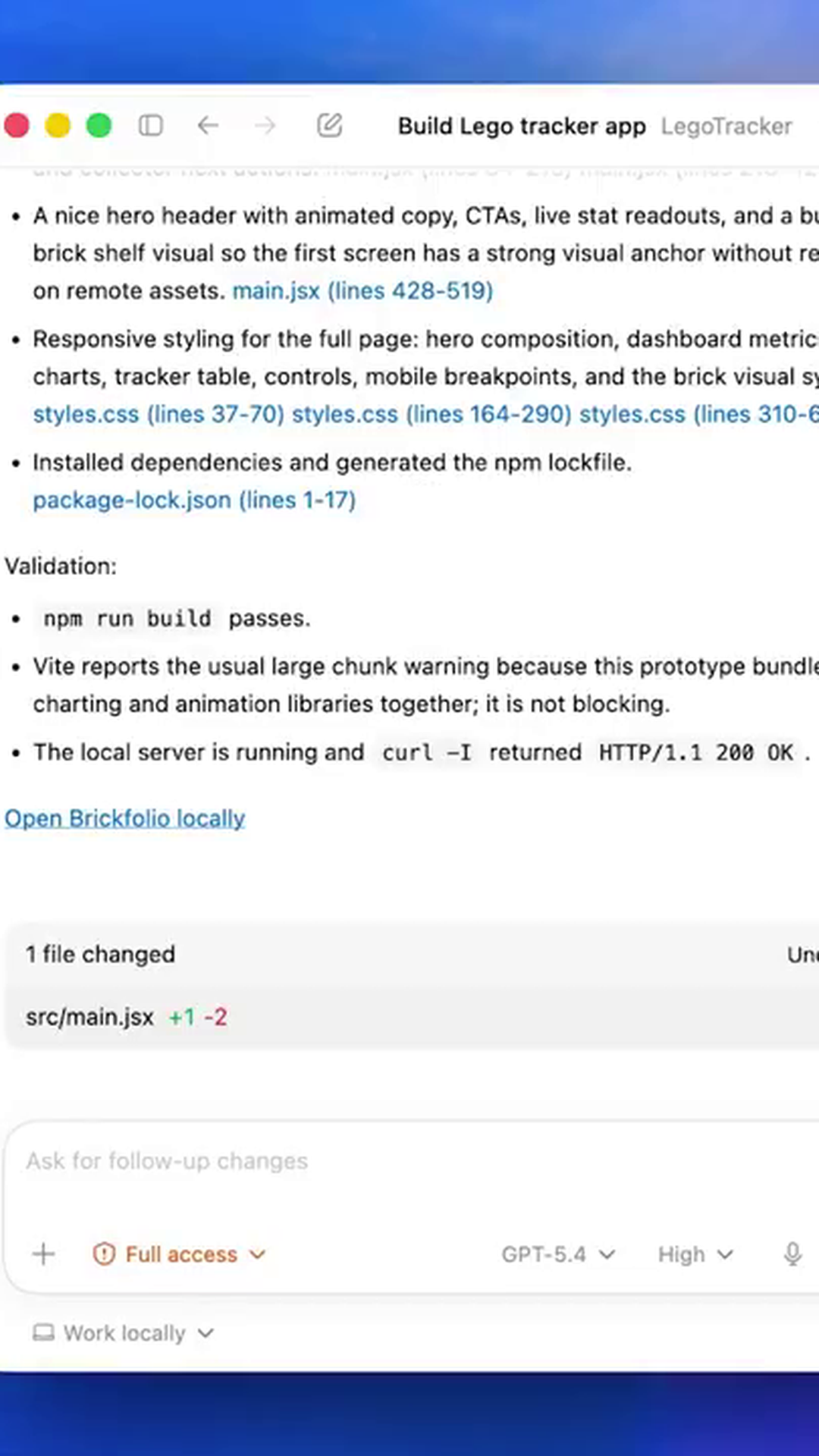

K2.6 uses a Mixture-of-Experts architecture with one trillion total parameters, of which 32 billion are active. The model specialises in long-horizon coding tasks and can execute more than 4,000 tool calls over 12 hours of continuous operation across Rust, Go, and Python. It can also generate sophisticated frontend applications with WebGL shaders, Three.js 3D graphics, and GSAP animations from a single prompt.
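To put the architecture and endurance figures in proportion, here is a minimal arithmetic sketch. It uses only the numbers quoted above and takes 4,000 as the tool-call count; the variable names are illustrative and not from Moonshot AI.

```python
# Figures quoted in the article; variable names are illustrative only.
TOTAL_PARAMS = 1_000_000_000_000   # one trillion total parameters
ACTIVE_PARAMS = 32_000_000_000     # 32 billion active parameters
TOOL_CALLS = 4_000                 # tool calls in one long-horizon run
RUN_HOURS = 12                     # hours of continuous execution

# Share of the model's parameters that are active.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Average pace implied by 4,000 tool calls spread over 12 hours.
calls_per_hour = TOOL_CALLS / RUN_HOURS
seconds_per_call = RUN_HOURS * 3600 / TOOL_CALLS

print(f"Active share of parameters: {active_fraction:.1%}")
print(f"Average tool calls per hour: {calls_per_hour:.0f}")
print(f"Average seconds per tool call: {seconds_per_call:.1f}")
```

On these figures, about 3.2% of the parameters are active, and the run averages one tool call roughly every 11 seconds. These are averages only; the article does not say how calls are distributed over the run.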

The standout feature is K2.6's agent swarm capability, which coordinates 300 parallel sub-agents running 4,000 steps per execution, up from K2.5's 100 agents and 1,500 steps: three times as many agents and roughly 2.7 times as many steps. This allows the system to generate over 100 files from a single prompt through distributed processing.
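The generation-over-generation scaling can be checked with quick arithmetic. This sketch uses only the agent and step counts quoted above; the constant names are illustrative.

```python
# Swarm limits quoted in the article for each model generation.
K25_AGENTS, K25_STEPS = 100, 1_500
K26_AGENTS, K26_STEPS = 300, 4_000

# Growth factors from K2.5 to K2.6.
agent_ratio = K26_AGENTS / K25_AGENTS
step_ratio = K26_STEPS / K25_STEPS

print(f"Sub-agents: {agent_ratio:.2f}x")
print(f"Steps per run: {step_ratio:.2f}x")
```

The agent count grows exactly threefold, while the step limit grows by about 2.67 times.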

The release marks a notable shift in the competitive landscape. Chinese AI laboratories, which were trailing on coding benchmarks a year ago, are now setting performance standards whilst keeping their models open source. As open-source models match proprietary systems on real-world engineering tasks, the economic case for them is becoming increasingly compelling for organisations choosing which model to deploy.
